How AI Unsettles the Apprenticeship in Astronomy

This essay is part of the Observing AI Entering Astrophysics series.

Astronomy apprenticeship in the United States is facing a perfect storm. Federal funding for graduate training has contracted sharply: NSF Graduate Research Fellowship Program awards in physics and astronomy were cut roughly in half in 2025, and the proposed FY2026 budget would completely defund the Astronomy and Astrophysics Postdoctoral Fellowships program. At the same time, AI tools have begun doing work in an hour that would have taken a capable graduate student months.

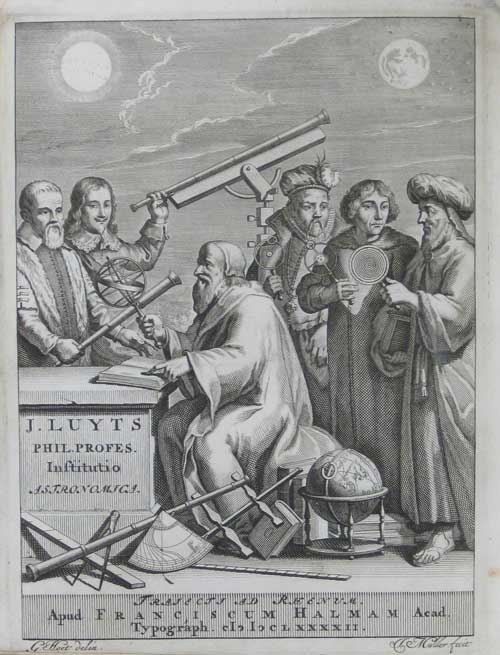

Let us be frank. Graduate students are relatively cheap and relatively untrained labor. The implicit agreement was one of apprenticeship: in exchange for lower wages, a graduate student gained experience and learned a niche craft. It was a bargain that structured how scientific knowledge passed from one generation to the next. Now AI may undercut the labor side of that equation, forcing us to ask how research experience will be passed down.

There is a hopeful version of this story: AI could democratize access to research and training, enabling a wider set of people to do astrophysics. That possibility is real. But here we will explore the most immediate impact we are already observing.

Parallels in the Software Engineering World

We should first recognize that this issue of apprenticeship is not specific to astronomy.

As noted in the introductory post in this series, modern astronomy has already become substantially computational. A large fraction of working astrophysicists spend most of their time programming numerical models and writing pipelines for data analysis. This means that disruptions in software engineering and data work directly affect astronomers.

Notably, the disruptions in the tech sector began more than a year before the onset of agentic AI in late 2025. Entry-level tech hiring fell 25 percent from 2023 to 2024. Overall U.S. programmer employment dropped 27.5 percent between 2023 and 2025. Junior developer postings fell roughly 60 percent between 2022 and 2024.

Writing in the Communications of the ACM (Feb 17, 2026), Azure CTO Mark Russinovich and Scott Hanselman, VP at Microsoft CoreAI, described how AI tools give senior engineers a productivity boost while imposing a drag on early-career developers who lack the judgment to steer and verify AI output. The prescription they offered was a culture of deliberate mentorship: "balancing automation with apprenticeship." Whether and how that prescription is followed remains to be seen, so there is not yet a tested model that astronomers could adopt.

In my conversations with astronomers, a similar asymmetry in AI's impact on senior and junior researchers is emerging. Faculty who have embraced AI tools report that, yes, they can now do in an hour what would previously have taken a capable graduate student months, while their students' learning does not necessarily benefit in the same way.

The AI Boost for Seniors

A faculty member in physics and astronomy at a major U.S. research university sees the appearance of agentic AI as a phase transition. On the positive side, she notes that AI-generated code tends to be properly documented and of far better quality than most astrophysicists can produce when squeezed for time and resources. As a consequence, AI-generated code can be reusable in a way that hastily written human research code often is not, which benefits open science. She certainly feels the boost. There is a learning curve for prompting and instructing AI effectively, she says, and it has to be cleared before the full potential is unlocked.

Nadia Zakamska (professor of astrophysics and Vice Chair for Academics in the Department of Physics and Astronomy at Johns Hopkins University) describes a similar experience. She began working with an AI agent in early February 2026. Her goal, she said, was practical: not to test the frontier of what AI can do in the abstract, but to see whether she could do more with it in her own research.

For writing, she reports, the results were striking: she found AI "fantastic" for producing summaries, abstracts, and research proposals once she provided text samples and ideas, to the point that "I don't think I had to edit anything" — notable, she emphasizes, for someone who "really cares about my writing." It also excelled at finding relevant catalogs of astronomical data she hadn't encountered, and at navigating software installations, providing what she described as "the most gratifying experience of having a personal software assistant who translated all the error messages into English."

Yet when it came to certain debugging tasks and bringing work to a finished quality, she found herself in territory that required a great deal of what she calls "very micro-managey" oversight. And the agent made errors of strategic coherence, losing the thread of what the overall task actually was, which she found surprising: "Even a beginning student wouldn't make that mistake".

Given that these tools are only a few months old, we might expect them to improve and some of these issues to slowly go away. What is likely to persist is the frustration around mentoring and apprenticeship.

The Drag for Juniors — and What Makes Astronomy Different

This is where astronomy diverges from a simple retelling of the software story. The drag on early-career developers in tech is primarily economic: if AI can handle the tasks juniors used to be paid to do, the business case for hiring them weakens. In astronomy, that pressure exists too. But there are additional layers that are specific to how scientific research works.

The first is what David Hogg (NYU / Flatiron / MPIA) identified in a February 2026 white paper, "Why do we do astrophysics?" He argued that training a technical workforce is one of the genuine benefits of doing astrophysics — not incidental to the science, but part of its justification. And he insisted on a foundational point: people are always the ends, not merely the means. Software companies are not in the business of producing the next generation of software engineers. Research universities, at least nominally, are in the business of producing the next generation of scientists. That mission is now in direct tension with the incentive structure AI creates.

The second layer is about the nature of the work itself. As the ACM piece noted, "today's expertise becomes tomorrow's intuition." Experts get an AI boost precisely because they can draw on intuition they have already built over years. The tools amplify what you bring to them. This is broadly true across fields, but astronomy has a particular version of the problem.

Yuan-Sen Ting (associate professor of astronomy at The Ohio State University), who has worked closely with AI tools for longer than most in the field, describes a specific new tension around the field's most valuable currency: interesting problems. Once AI is powerful enough to implement an idea quickly, a professor must decide whether to simply do it in an hour (to avoid being scooped and to shorten the time between idea and result) or to pass it to a student and take on the additional overhead of mentorship. The project that would have been a formative experience, a first-author paper, a year of hard-won understanding, simply does not exist for that student when the senior researcher runs it through an agent instead. He sees this apprenticeship problem as part of a broader need to rethink scholarship entirely.

Nadia Zakamska describes a related pipeline that has worked particularly well for her. She has long collaborated with students who entered astronomy from computer science, treating astronomy, at least initially, as a sandbox for testing machine learning and other computational techniques. The project gets done by someone already proficient in computational tools, and that student, even if they do not go on in astronomy, gains valuable experience in scientific inquiry. Now this mutually beneficial arrangement may change as the value of computational skills is reassessed.

Her greater concern is for students who enter graduate school with weaker computing and writing skills. In the past, those students had time to develop good judgment through hard work and trial and error: how do you proofread your code, what plots do you make to test it, how do you organize and edit your writing? It is not yet clear how these skills can be developed by a junior scientist in AI-mediated research.

The faculty member quoted earlier points to another problem that is perhaps the most structurally important. While she feels the boost herself, she does not know how to teach graduate students to do the same. Prompting works best when you already know what you want. The skill that a junior researcher is still building is precisely that: knowing what to want, what to trust, which results to question. And her observation goes further: in her view, the threshold between experiencing AI as a drag and experiencing it as a boost comes not at the graduate student level, but much later in a career. Not even postdocs are reliably past it. If the boost requires a level of scientific maturity that takes a decade or more to develop, it does not help junior researchers at all. It is a tool that rewards a very high level of experience and is neutral to everything below it.

This is not a problem with a consensus answer yet. Those involved in postdoc hiring described sitting with genuine uncertainty about what criteria to use when evaluating candidates. Some of the qualities that used to define a strong applicant, particularly around computational skill, now feel misaligned with whatever the new landscape requires.

What Holds the Deal Together

There is unquestionable willingness among astronomers to pass the torch. Yet the current moment makes many question whether the way they have been doing it can survive unchanged. Nearly all of them see passing on intuition and taste for good science as one of the key objectives in training graduate students. These concepts are hard to pin down and tightly interwoven with the methods themselves, so it is unclear what exactly they are and how they can be taught in AI-mediated research.

The way we currently do this involves graduate and postdoctoral training that demands travel, pays poorly, and is often mentally taxing. People enter this path for a wide range of pragmatic reasons, such as developing a transferable set of analytical and computational skills or meeting their migration goals, as well as more intangible ones: curiosity, a sense of vocation, and the desire to be recognized as an expert (for good reason). AI bears on nearly every one of these motivations, leaving the future of the apprenticeship contract unclear.

Concerns about AI's sweeping effects might seem exaggerated. An alternative is to view this moment through a much broader lens. Perhaps this is yet another step in gradual technological progress, and we are simply living through it, which is what makes it feel exceptional. The field has absorbed many methodological transitions before: from human calculators to computers, from machine code to high-level programming languages, from analytical models to numerical simulations. At the time, those shifts were not obviously manageable either, and whole lineages of inquiry collapsed or were greatly diminished. New methods replaced old ones, went through a stage of maturation, and had their limitations become better understood. Perhaps AI is simply another such method that needs a little time to mature.

In the next essay in this series, we will examine this question directly: do the epistemological changes AI introduces really mirror those earlier transitions in science? Is it more of the same, or something qualitatively different?

If you are an astronomer at any career stage and have observations to share about how AI is changing your research or training experience, I would very much like to hear from you.